Turning Your Codebase Into Stakeholder-Ready Documentation with AI

Most teams treat documentation as a separate activity from development.

Code is written. Features ship. Then later… someone asks for:

- Security controls

- Architecture documentation

- Data flow diagrams

- Audit evidence

- Risk assessments

And the team scrambles to reconstruct what already exists.

But what if the documentation is already there?

In the codebase itself.

In conversations you’ve had with AI while building features.

If you treat your codebase and AI interactions as a living work diary, you can generate stakeholder-ready documentation on demand.

Summary

Treat your codebase, PRs, commit history, and AI conversations as a structured work diary so AI can synthesize accurate, stakeholder-ready documentation on demand. AI can parse repositories and reasoning trails to produce drafts (security controls, data flows, architecture) and, critically, surface gaps that indicate product or clarity issues. Implement this by structuring the codebase, generating periodic AI-driven reports, and using discrepancies as a forcing function for improvements. The result is reduced reactive overhead, preserved institutional memory, and tighter alignment between engineering work and stakeholder visibility.

The Problem: Documentation Is Usually Retrospective

Most stakeholder documentation is created:

- During audits

- Before enterprise deals

- After security reviews

- When compliance is requested

- During due diligence

It’s reactive.

Engineers then have to:

- Re-explain architectural decisions

- Reconstruct data handling logic

- Translate implementation details into control language

- Fill in gaps they didn’t realize existed

This is expensive and disruptive.

The irony?

The knowledge already exists in development artifacts.

The Shift: Treat Development as a Structured Diary

Think about what modern teams already produce:

- Source code

- Infrastructure-as-code

- CI/CD configurations

- Pull request discussions

- Architecture Decision Records (ADRs)

- Slack or email design debates

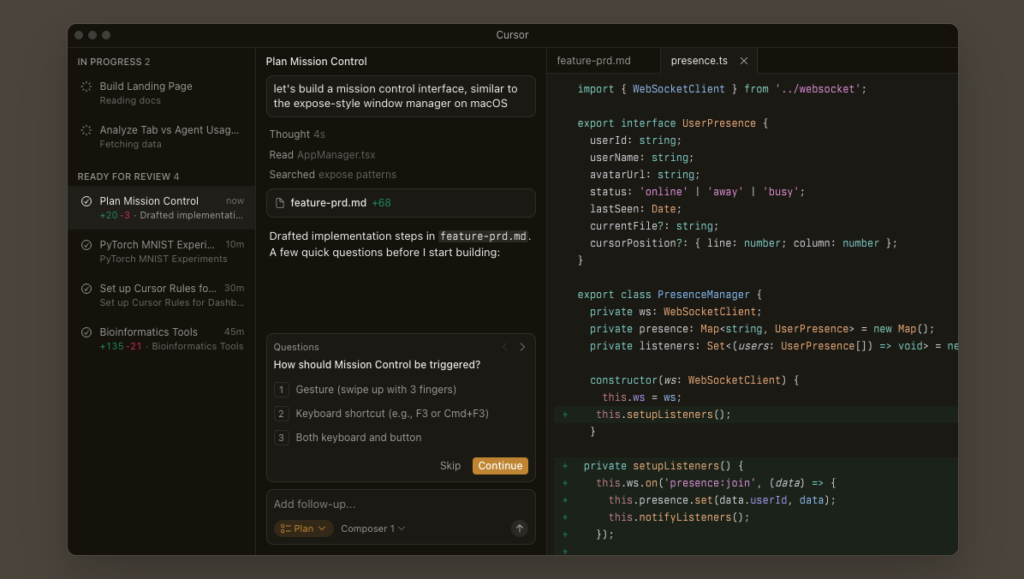

- AI-assisted coding sessions

When engineers use tools like ChatGPT or other AI-assisted coding workflows, they often:

- Explain their intent

- Ask about tradeoffs

- Clarify security implications

- Refine edge cases

- Iterate on architectural decisions

Those conversations are not just implementation helpers.

They are reasoning trails.

That reasoning trail is exactly what stakeholders ask for.

How AI Changes the Equation

AI can now:

- Parse your repository structure

- Summarize data flows

- Infer security controls from code patterns

- Extract infrastructure assumptions

- Convert implementation logic into control narratives

For example, AI can generate:

- A draft Security Controls document

- A data handling overview

- An access control explanation

- An architecture summary

- A threat consideration checklist

Not as hallucinated theory, but as drafts grounded in the actual implementation.

This does two important things.

1. It Produces Documentation On Demand

Instead of writing from scratch, you prompt:

“Based on this repository and infrastructure setup, generate a stakeholder-ready summary of security controls.”

What comes back is often 70–80% complete.

Engineers review, refine, and adjust.

Time to first draft drops dramatically.
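As a rough illustration of what "prompting with the repository" can look like, here is a minimal Python sketch that gathers source and infrastructure files into one block of text and prepends the prompt quoted above. The function names, the list of file suffixes, and the character budget are all assumptions made for this example, not part of any particular tool. Sending the resulting text to a model is deliberately left out, since that depends on the AI service you use.

```python
from pathlib import Path

# Hypothetical defaults: adjust to the file types your repository actually uses.
DEFAULT_SUFFIXES = (".py", ".tf", ".yml", ".yaml", ".md")

PROMPT = (
    "Based on this repository and infrastructure setup, generate a "
    "stakeholder-ready summary of security controls."
)


def collect_repo_context(root, suffixes=DEFAULT_SUFFIXES, max_chars=20_000):
    """Concatenate matching files under root, each preceded by its relative path."""
    root = Path(root)
    parts = []
    used = 0
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        relative = path.relative_to(root)
        if ".git" in relative.parts:
            continue  # skip version-control internals
        text = path.read_text(encoding="utf-8", errors="replace")
        block = f"### {relative.as_posix()}\n{text}\n"
        if used + len(block) > max_chars:
            break  # stay within a rough context budget
        parts.append(block)
        used += len(block)
    return "".join(parts)


def build_prompt(root):
    """Return the instruction followed by the collected repository context."""
    return f"{PROMPT}\n\n{collect_repo_context(root)}"
```

Calling `build_prompt(".")` from the repository root returns a single string you can paste or send to whichever assistant your team uses. For larger repositories you would likely need to select files more carefully than a simple character cutoff.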

2. It Surfaces Gaps

This is the more interesting part.

When AI attempts to assemble a coherent narrative, it will sometimes expose:

- Missing encryption assumptions

- Unclear access boundaries

- Unvalidated input paths

- Hardcoded configuration risks

- Logging blind spots

Because documentation forces coherence.

If the story cannot be told cleanly, that’s often a product gap.

The act of generating documentation becomes a diagnostic tool.
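One way to make those gaps easy to collect is to ask the model to flag anything it could not confirm from the code. The sketch below assumes a hypothetical convention in which the prompt instructs the model to start such lines with `GAP:`. Both the marker and the `extract_gaps` helper are invented for this example.

```python
import re

# Matches lines such as "GAP: ..." or "- GAP: ..." (the marker is an assumed convention).
GAP_PATTERN = re.compile(r"^\s*(?:[-*]\s*)?GAP:\s*(.+?)\s*$", re.MULTILINE)


def extract_gaps(draft):
    """Return the text of every line the model flagged with the GAP: marker."""
    return GAP_PATTERN.findall(draft)


# Illustrative draft text, not real model output.
draft = """Encryption: TLS is used in transit.
- GAP: encryption at rest is not evident in the storage configuration
Access control: role checks exist on admin routes.
GAP: no logging found for failed login attempts
"""

for gap in extract_gaps(draft):
    print("Needs follow-up:", gap)
```

Each extracted line can then become a ticket or a review question, which keeps the diagnostic value of the draft from getting lost in a long document.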

Code + AI Conversations = Institutional Memory

If you go further and include AI interaction logs in your workflow, something powerful happens.

When developers:

- Explain requirements to AI

- Debate tradeoffs

- Refine constraints

- Ask about security implications

They are externalizing intent.

That intent is usually lost over time.

But if stored intentionally, it becomes:

- Decision traceability

- Rationale history

- Design context

- Justification during audits

In other words, your AI conversations become part of your system of record.
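Storing that intent does not require special tooling. As a minimal sketch, the Python below appends one JSON line per decision to a log file kept next to the code. The `DecisionRecord` fields, the file layout, and the helper names are assumptions chosen for illustration, so adapt them to whatever your team already records.

```python
import json
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class DecisionRecord:
    """One captured decision. The field names are hypothetical."""
    date: str       # ISO date of the decision
    topic: str      # area of the system, e.g. "auth"
    decision: str   # what was decided
    rationale: str  # why, in a sentence or two
    source: str     # where the reasoning happened, e.g. "ai-session" or "pr"


def append_record(log_path, record):
    """Append a record to a JSON Lines file, creating parent folders if needed."""
    log_path = Path(log_path)
    log_path.parent.mkdir(parents=True, exist_ok=True)
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(record)) + "\n")


def load_records(log_path, topic=None):
    """Read records back, optionally keeping only those for one topic."""
    records = []
    with Path(log_path).open(encoding="utf-8") as fh:
        for line in fh:
            if line.strip():
                record = DecisionRecord(**json.loads(line))
                if topic is None or record.topic == topic:
                    records.append(record)
    return records
```

During an audit, `load_records("docs/decisions.jsonl", topic="auth")` (the path is only an example) would return the rationale history for that area in the order it was written.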

Practical Implementation Approach

If you want to operationalize this idea, start simple.

Step 1: Ensure Your Codebase Is Structured

- Clear folder organization

- Explicit environment configurations

- Infrastructure-as-code

- Descriptive PR titles

- Brief inline comments that explain non-obvious logic

Garbage in, garbage out still applies.

Step 2: Periodically Generate Reports

Every sprint or milestone, prompt:

- “Summarize security controls implemented in this repository.”

- “Describe data flow from user input to persistence.”

- “List potential risk areas based on current architecture.”

Save those outputs.

Treat them as living drafts.
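A small script can make this routine. The sketch below runs the three prompts above and saves each answer under a dated folder so drafts from different sprints sit side by side. The `ask_model` argument is a placeholder for whatever function your team uses to call an AI service. It is an assumption of this example, as are the folder layout and the report names.

```python
from datetime import date
from pathlib import Path

# The prompts from the list above, keyed by a hypothetical report name.
REPORT_PROMPTS = {
    "security-controls": "Summarize security controls implemented in this repository.",
    "data-flow": "Describe data flow from user input to persistence.",
    "risk-areas": "List potential risk areas based on current architecture.",
}


def save_report(reports_dir, name, text, when=None):
    """Write one report to <reports_dir>/<YYYY-MM-DD>/<name>.md and return its path."""
    when = when or date.today()
    target = Path(reports_dir) / when.isoformat() / f"{name}.md"
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(text, encoding="utf-8")
    return target


def generate_reports(reports_dir, ask_model, when=None):
    """Run every prompt through ask_model (a caller-supplied function) and save the results."""
    return [
        save_report(reports_dir, name, ask_model(prompt), when)
        for name, prompt in REPORT_PROMPTS.items()
    ]
```

Committing the dated folders to the repository gives you a history of drafts that can be reviewed like any other change.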

Step 3: Compare Output to Expectations

Where the AI struggles:

- Add clarity to the code

- Formalize assumptions

- Write missing controls

- Add validation

- Improve naming

Documentation generation becomes a forcing function for clarity.
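The comparison itself can start out very simple. As a deliberately crude sketch, the Python below checks a generated report against a list of controls you expect to see and returns the ones that are never mentioned. The expected controls and their phrases are made-up examples, and plain substring matching is an assumption that will miss paraphrases, so treat the result as a prompt for human review rather than a verdict.

```python
# Hypothetical expectations: control name -> phrases that would indicate it is covered.
EXPECTED_CONTROLS = {
    "encryption at rest": ["encryption at rest", "encrypted at rest"],
    "input validation": ["input validation", "validates input"],
    "audit logging": ["audit log"],
}


def missing_controls(report, expected=None):
    """Return the expected controls that no phrase in the report mentions."""
    expected = EXPECTED_CONTROLS if expected is None else expected
    text = report.lower()
    return [
        name
        for name, phrases in expected.items()
        if not any(phrase in text for phrase in phrases)
    ]


# Illustrative report text, not real model output.
report = "Data is encrypted at rest using managed keys. Admin actions go to an audit log."
print(missing_controls(report))
```

Anything the function returns is either a control the code lacks or one the code implements so implicitly that the AI could not describe it. Both cases are worth a look.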

Why This Matters for Engineering Leaders

This approach changes documentation from reactive overhead to continuous reflection.

It also:

- Reduces audit friction

- Speeds up enterprise sales support

- Improves architectural discipline

- Preserves institutional knowledge

- Helps newer engineers understand intent faster

Most importantly, it aligns engineering work with stakeholder visibility without duplicating effort.

A Different Way to Think About It

Instead of asking:

“How do we write better documentation?”

Ask:

“How do we structure development so documentation can be generated?”

If your system can describe itself clearly, you likely built it clearly.

If it cannot, that’s useful information.

Final Thought

We often think AI accelerates coding.

But one of its more practical enterprise uses might be this:

Turning everyday development artifacts into structured, stakeholder-ready narratives.

Not as marketing.

Not as paperwork.

But as a reflection of what the system actually is.

If your codebase had to explain itself tomorrow… would it be ready?

Q&A

Question: What does “treat development as a structured work diary” actually mean?

Short answer: It means recognizing that the artifacts teams already create (source code, infrastructure-as-code, CI/CD configs, pull request discussions, ADRs, design debates in Slack or email, and AI-assisted coding conversations) capture intent, tradeoffs, and security considerations. When you preserve these reasoning trails alongside the code, AI can synthesize them into clear narratives for stakeholders, turning everyday work into living documentation and institutional memory.

Question: How does AI turn our codebase into stakeholder-ready documents?

Short answer: AI can parse repository structure, summarize data flows, infer security controls from code patterns, and extract infrastructure assumptions, then convert implementation details into control narratives. From this, it can draft security controls, data handling overviews, access control explanations, architecture summaries, and threat checklists, often 70–80% complete. Engineers review and refine the drafts, dramatically reducing time to the first usable version.

Question: Why is AI-generated documentation also a diagnostic tool?

Short answer: When AI struggles to produce a coherent story, it often highlights real product or clarity gaps. Typical signals include missing encryption assumptions, unclear access boundaries, unvalidated inputs, hardcoded configuration risks, and logging blind spots. The need to “tell the story” forces coherence; if it can’t be told cleanly, that points to places where the system or its clarity needs improvement.

Question: What are the practical steps to implement this approach?

Short answer:

- Ensure the codebase is structured: clear folders, explicit environment configs, infrastructure-as-code, descriptive PR titles, and brief inline comments for non-obvious logic (garbage in, garbage out still applies).

- Generate reports periodically (each sprint or milestone) with prompts like “Summarize security controls” or “Describe data flow from input to persistence,” and save them as living drafts.

- Compare outputs to expectations and act where AI struggles: clarify code, formalize assumptions, add validations and missing controls, and improve naming.

Question: What outcomes can engineering leaders expect?

Short answer: Documentation shifts from reactive overhead to continuous reflection, reducing audit friction, speeding enterprise sales support, improving architectural discipline, preserving institutional knowledge, and helping new engineers grasp intent faster. Most importantly, it aligns day-to-day engineering work with stakeholder visibility without duplicating effort.