AI is Becoming Infrastructure…and AI Safety is Becoming a Default Requirement

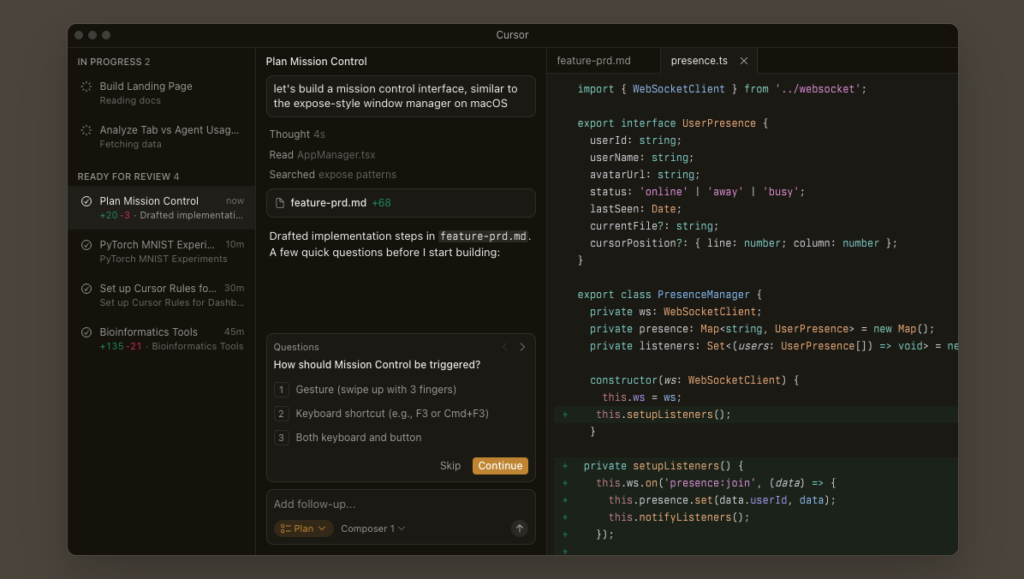

A lot of teams still talk about “adding AI” like it’s just another feature.

But once you embed a chatbot or generative assistant into a real product, you’ve effectively launched a dynamic content system. Not a widget.

And dynamic content systems come with new expectations:

- Who can access it… especially minors?

- What happens when it produces harmful or sensitive output?

- Can you demonstrate reasonable safeguards?

- Can you adapt quickly when regulations shift?

AI safety is moving from “nice to have” to a baseline requirement, and meeting it takes deliberate, repeatable processes rather than ad hoc fixes.

A summary of AI safety

Regulators in the UK, US, and EU are converging on tighter, default-first safety expectations for AI, especially chat-based systems. Because chat is probabilistic and easily steered into sensitive areas, teams must treat it as a live dynamic content system with age-safety design, real-time guardrails, monitoring, and clear governance. Building “safety by default” into architecture early reduces retrofit costs and becomes a competitive advantage, making operational compliance and adaptability the real moat. Leaders should prioritize age access, enforcement beyond prompts, audit evidence, rapid policy updates, and ownership of ongoing safety governance.

The regulatory direction is becoming clearer

The UK is a useful example of where this is heading.

Prime Minister Keir Starmer recently signaled greater powers to regulate online access, with discussions that include tightening AI chatbot safety rules alongside potential restrictions for under-16s on social media.

The UK government has also been explicit that “no platform gets a free pass” under its broader online safety push (UK Government).

Under the UK Online Safety Act framework, services likely to be accessed by children are expected to conduct children’s access assessments and implement highly effective age assurance in certain contexts.

And this isn’t UK-only.

- In the US, COPPA already governs online services directed at children under 13, focusing on parental consent and data handling.

- California’s Age-Appropriate Design Code pushes privacy and safety by default for minors under 18.

- The EU AI Act introduces transparency requirements, including obligations to inform users when interacting with certain AI systems.

Different jurisdictions. Different scopes. Same pattern.

More scrutiny.

More accountability.

More expectation that safety is built into the system itself.

Chat changes the risk profile

Traditional software is mostly deterministic.

Chatbots are probabilistic. Users can pivot conversations into sensitive territory in seconds. Even if your product isn’t “about” those topics, your assistant might still be asked about them.

When you ship chat, you’re operating a live conversational system.

That requires thinking beyond prompts and model tuning:

- Explicit age-safety design

- Guardrails that work in real time

- Monitoring and logging infrastructure

- Escalation paths for edge cases

- Clear ownership between product, legal, and engineering

These are architectural decisions, not cosmetic add-ons.
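To make "guardrails that work in real time" and "escalation paths" a little more concrete, here is a minimal sketch of one possible shape: a wrapper that checks the user's message before the model sees it and checks the model's reply before the user sees it. Everything in it is an assumption for illustration, including the names `guarded_reply`, `is_sensitive`, and `escalate`, the keyword list, and the fallback wording. A real system would more likely use a trained classifier or a moderation service than a keyword match.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical term list, for illustration only. Keyword matching is a
# stand-in for whatever classifier or moderation service a team adopts.
SENSITIVE_TERMS = {"self-harm", "weapon"}

FALLBACK_REPLY = "I can't help with that here."


@dataclass
class GuardrailResult:
    reply: str
    blocked: bool
    stage: str  # "input", "output", or "none"


def is_sensitive(text: str) -> bool:
    """Return True if the text contains any of the illustrative terms."""
    lowered = text.lower()
    return any(term in lowered for term in SENSITIVE_TERMS)


def guarded_reply(
    user_message: str,
    model: Callable[[str], str],
    escalate: Callable[[str, str], None],
) -> GuardrailResult:
    """Run an input check, call the model, then run an output check."""
    # Enforcement point 1: before the model is called.
    if is_sensitive(user_message):
        escalate("input", user_message)
        return GuardrailResult(FALLBACK_REPLY, True, "input")

    reply = model(user_message)

    # Enforcement point 2: before the reply reaches the user.
    if is_sensitive(reply):
        escalate("output", reply)
        return GuardrailResult(FALLBACK_REPLY, True, "output")

    return GuardrailResult(reply, False, "none")
```

The point of the sketch is the structure, not the check itself: both enforcement points sit outside the prompt, and every block is routed to an escalation hook that product, legal, and engineering can agree on who owns.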

“AI safety by default” is becoming the design constraint

Regulators increasingly emphasize default protections rather than optional disclaimers.

For product teams, that translates into real design tradeoffs:

- Age verification flows that don’t destroy onboarding conversion

- Input/output filtering that doesn’t degrade user experience (UX)

- Logging that preserves privacy while enabling accountability

- Rapid policy updates that don’t require full redeployments

If you design these late, you end up retrofitting them across your entire stack.

If you design them early, they become part of your competitive advantage.
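One way to read "rapid policy updates that don’t require full redeployments" is to treat the safety policy as data rather than code. The sketch below assumes a hypothetical JSON policy document with `version`, `min_age`, and `blocked_topics` fields; the field names, the `PolicyStore` class, and the example values are illustrative assumptions, not thresholds taken from any regulation.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyPolicy:
    version: str
    min_age: int
    blocked_topics: frozenset


def parse_policy(raw: str) -> SafetyPolicy:
    """Parse a JSON policy document (hypothetical schema) into a policy object."""
    data = json.loads(raw)
    return SafetyPolicy(
        version=str(data["version"]),
        min_age=int(data["min_age"]),
        blocked_topics=frozenset(t.lower() for t in data["blocked_topics"]),
    )


class PolicyStore:
    """Holds the active policy so it can be swapped while the service runs."""

    def __init__(self, initial: SafetyPolicy) -> None:
        self._policy = initial

    @property
    def current(self) -> SafetyPolicy:
        return self._policy

    def update(self, raw: str) -> None:
        # Parse fully before assigning, so a malformed document raises
        # and the previous policy stays in force.
        self._policy = parse_policy(raw)


def is_allowed(store: PolicyStore, age: int, topic: str) -> bool:
    """Evaluate a request against whichever policy is active right now."""
    policy = store.current
    return age >= policy.min_age and topic.lower() not in policy.blocked_topics
```

A short usage example, again with made-up values:

```python
store = PolicyStore(parse_policy(
    '{"version": "1", "min_age": 13, "blocked_topics": ["gambling"]}'
))
is_allowed(store, 14, "homework")   # evaluated against version 1

# Requirements tighten: publish a new document, no redeploy needed.
store.update('{"version": "2", "min_age": 16, "blocked_topics": ["gambling"]}')
is_allowed(store, 14, "homework")   # now evaluated against version 2
```

Carrying a `version` field also helps later, because each decision can be traced back to the policy that was active when it was made.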

The moat isn’t the model

Most teams can integrate an LLM.

Fewer can ship a chatbot that:

- Holds up under real-world misuse

- Survives regulatory scrutiny

- Adapts quickly as guidance evolves

- Provides evidence of controls and risk mitigation

That “boring compliance layer” is becoming the moat.

Not because it’s flashy.

But because rebuilding under regulatory pressure is far more expensive than designing responsibly from the start.

What leaders should be asking about AI safety right now

If you’re investing in AI-powered features this year, especially chat-based ones, expand your evaluation criteria.

Ask:

- How are we handling age-safety and youth access?

- What are our enforcement points beyond system prompts?

- What monitoring and audit evidence can we produce?

- How quickly can we adapt if requirements tighten?

- Who owns ongoing safety governance?
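For the audit-evidence question in particular, one possible starting point is a structured record per safety decision that can be correlated per user without storing the raw identifier or the conversation text. The sketch below is an assumption-heavy illustration: the function names, the record fields, and the choice of a keyed hash (HMAC-SHA256) for pseudonymization are not drawn from any specific regulation or from the sources cited in this article.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone


def pseudonymize(user_id: str, secret: bytes) -> str:
    """Keyed hash of the user ID: stable for the same user and key,
    and not recomputable from the ID by anyone who lacks the key."""
    return hmac.new(secret, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def audit_record(
    user_id: str,
    decision: str,
    rule: str,
    policy_version: str,
    secret: bytes,
) -> str:
    """Build one JSON audit line. Field names are hypothetical."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": pseudonymize(user_id, secret),  # no raw identifier stored
        "decision": decision,                    # e.g. "allowed" or "blocked"
        "rule": rule,                            # which control fired
        "policy_version": policy_version,        # which policy was active
    }
    return json.dumps(record, sort_keys=True)
```

Records like this deliberately leave out the message content. Whether that is enough evidence, or whether some content must be retained under stricter access controls, is a decision for legal and product to make together.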

Shipping the chatbot is one milestone.

Shipping it responsibly… and defensibly… is another.

The regulatory signals from the UK and elsewhere are not isolated events. They’re indicators of a structural shift.

AI is no longer experimental.

For businesses across the spectrum, it’s becoming infrastructure.

And infrastructure comes with obligations.