Podcast

The Future Of Enterprise AI

In this episode of “The Future Of,” Jeff Dance interviews Douwe Kiela, CEO and co-founder of Contextual AI and co-inventor of the RAG (Retrieval Augmented Generation) technique. They discuss the evolution and future trajectory of enterprise AI, emphasizing the importance of context engineering over simple prompt engineering, and explore the challenges and opportunities associated with integrating language models in complex enterprise environments. The conversation covers current adoption trends, the value of multi-agent systems in AI, and the necessity for businesses to focus on both cost savings and new revenue generation. Ethical considerations and the broader societal impact of advanced AI technologies are also examined.

Podcast Transcript:

Jeff Dance: In this episode of The Future of, we’re joined by Douwe Kiela, CEO and co-founder of Contextual AI, to explore the future of enterprise AI.

Jeff Dance: By way of introduction, Douwe is the CEO and co-founder of Contextual AI. As I mentioned, he’s also the co-inventor of the RAG technique, which stands for Retrieval Augmented Generation. We’re going to talk about that. He’s a Stanford professor and was previously a research lead at Meta. He headed up AI research at Hugging Face, founded a digital agency, and earned a PhD in computer science from Cambridge; he also holds an undergraduate degree in philosophy. So he has quite the background for a conversation about enterprise AI. Douwe, tell us a little more about your journey in AI. Given how fast things have been changing, it seems like you picked everything perfectly to lead up to where we are today.

Douwe Kiela: Just to be precise: I have a PhD in computer science and an undergraduate degree in philosophy. That was my starting point. I was very interested in the mind, language, and things like that. One thing led to another, and that’s how I got into AI before it was cool. Then I joined Facebook AI Research very early on, when it was about a year old. It was really the golden age of AI, and I had a lot of fun there. We did a lot of foundational research that is still very relevant today, including RAG, but also other interesting things that may become important in the future. From there, I went to Hugging Face and then started this company. I’ve always been thinking about how we can get computers to think and reason like humans and have real understanding: how to get proper meaning into machines.

Jeff Dance: That’s awesome. I think the future is obviously humans and AI together. I was just speaking yesterday about how important it is for us as humans to connect with AI, but also to disconnect from it. It’s amazing how much change has happened, and we’re excited to have you. Before we dive into more questions, tell us a little more about what you do for fun.

Douwe Kiela: What do I do for fun? I hang out with my kids. Most of the time I work, unfortunately for my kids, but if I have non-working time, I like to spend it with them.

Jeff Dance: That’s great. When you have a demanding job, you have to make time for your kids as well. That sounds like a lot of type two fun in both categories. You’re the CEO and co-founder of Contextual AI. Tell us a little more about that.

Douwe Kiela: The story of Contextual is that we started it about two and a half years ago. ChatGPT had just happened, and we knew that the world would never be the same again, and that every enterprise in the world was going to want to do things with GenAI. But we also knew that the technology wasn’t quite ready and that the hard problem would really be around giving language models the right context. As humans, we’re very good at thinking, reasoning, and speaking language, but we also have a lot of context about each other and about the world. Everything that happens around us is contextual—everything is contextual, as I always say. So we knew that enterprises would need ways to take all of their data, extract the right context from it, and give it to language models so they could do something useful and valuable. RAG—Retrieval Augmented Generation, as you mentioned—is a version of that. But the broader problem we’re solving is really just: how do you give context to machines so they can provide maximum value?

Jeff Dance: Great. And your focus right now is on enterprises, essentially helping them vectorize their own databases and put conversational AI on top of that. Is that right?

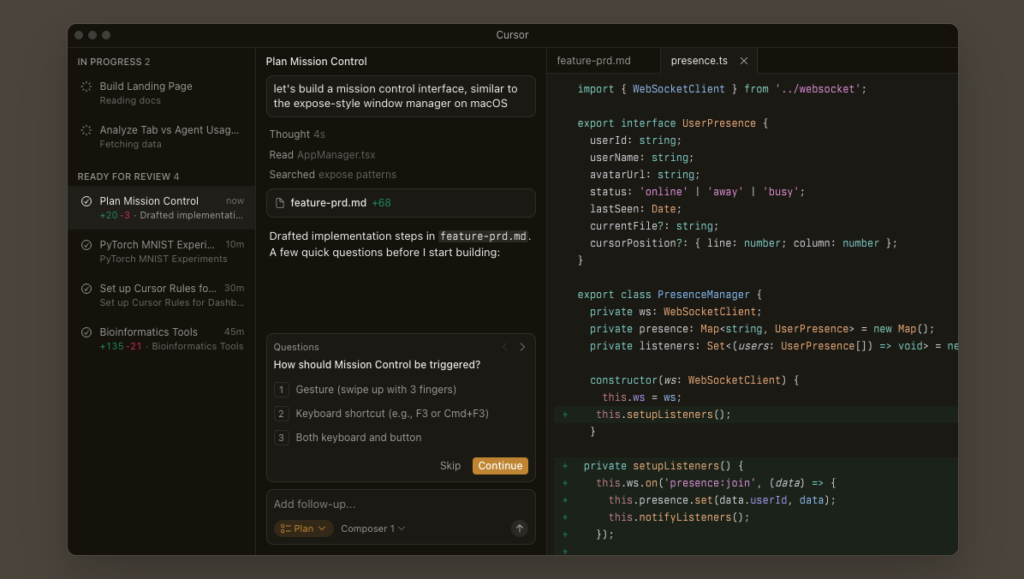

Douwe Kiela: Yes, there’s a term now that has gained a lot of traction called context engineering. People have realized that prompt engineering isn’t enough, and there’s a lot more work that goes into getting these systems to be useful. A lot of that is RAG, but there’s also tracking state, tool calling, memory, and all the things many of us are working on.

Jeff Dance: Right.

Douwe Kiela: What we’re really focused on is being a platform where you have all of your context engineering needs in one place. Especially in a large enterprise, if you’ve done a good job, you might have tens or even close to a hundred use cases where you’re using AI, and you want to get to 10,000 different problems solved. You need a unified context layer to make sense of all that, because otherwise it becomes very hard. A full agent with modern RAG capabilities is already very complex, and it’s only getting more so. Now imagine needing a custom architecture like that for 10,000 use cases. That means that, as an enterprise, you shouldn’t be doing the plumbing yourself; there are companies that specialize in it, like us.

Jeff Dance: That’s great. With conversational AI on top and the neural base and vectorized databases underneath, is context engineering the layer in the middle? Is it different from just having your own language model underneath with your own information? What else goes into the context engineering, or the context layer, that you provide?

Douwe Kiela: A lot of it is about hooking up different data sources. If you’re an enterprise, it’s easy to build a demo on one or two documents: you build some basic RAG stuff or throw it into ChatGPT. What we’re really looking at is the large enterprise whose data is all over the place. Real-world data is very noisy, with V0, V1, and V2 of a document, drafts, finals, and conflicting information, and you need to make sense of all the, frankly, very messy data that exists in a large enterprise. You hook all of that up to a context layer and make sure you can identify the relevant pieces of information you need: not just the data, but also the metadata and all the additional information you have about the documents and underlying data. Then you orchestrate all of that with an agent, or with language models if it’s not agentic, to solve a real problem. Context engineering is really the middle layer between data and intelligence. It’s clear now that the future involves many language models. We’re not just going to have one language model that does everything. We already have amazing language models specialized in different ways, and they’re going to get even more specialized. We’ll have small language models that are much more efficient if you care about latency or cost. In this multi-model world, with your data all over the place, you need a unified context layer to connect those pieces.
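The version problem described above (V0, V1, V2, drafts and finals of the same document) can be illustrated with a small metadata-based filtering step that runs before retrieval. This is a minimal Python sketch, not Contextual AI’s implementation: the `Doc` record and its `doc_id`, `version`, and `status` fields are hypothetical, and the rule “prefer the newest final, otherwise the newest draft” is only one assumption about how such conflicts might be resolved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str   # hypothetical: identifier shared by every version of one document
    version: int  # 0 for V0, 1 for V1, and so on
    status: str   # hypothetical metadata field: "draft" or "final"
    text: str


def latest_authoritative(docs: list[Doc]) -> list[Doc]:
    """Keep one version per document: the newest final if any exists, else the newest draft."""
    def rank(d: Doc) -> tuple[bool, int]:
        # Finals outrank drafts; within the same status, higher versions win.
        return (d.status == "final", d.version)

    best: dict[str, Doc] = {}
    for d in docs:
        if d.doc_id not in best or rank(d) > rank(best[d.doc_id]):
            best[d.doc_id] = d
    return sorted(best.values(), key=lambda d: d.doc_id)


if __name__ == "__main__":
    corpus = [
        Doc("policy", 0, "final", "Old policy text"),
        Doc("policy", 2, "draft", "Work-in-progress policy"),
        Doc("policy", 1, "final", "Current policy text"),
        Doc("faq", 0, "draft", "FAQ draft"),
    ]
    for d in latest_authoritative(corpus):
        print(d.doc_id, f"V{d.version}", d.status, "->", d.text)
```

A real context layer would likely weigh many more metadata signals (owner, date, source system) and might keep superseded versions available for questions that are explicitly about a document’s history.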

Jeff Dance: Yeah, that’s great. One thing I’ve been thinking about, and that we’ve experienced, is that there’s value in sitting on top of customer and enterprise data and vectorizing databases, but there’s also value in some of the other large language models. How do you bridge that? Are you at the agent layer, determining which information you’re tying into? Do you tie into other data sources as well? For enterprises, that’s not a problem but an opportunity: to tap into other data sources that exist.

Douwe Kiela: We let you hook up all kinds of different data sources. You can connect your Google Drive, SharePoint, Confluence, or just have a bucket of documents, or hook up structured data so we can connect to databases. The system automatically figures out if it needs to run a structured data query, like an SQL query, get results, and maybe combine that with unstructured data. In enterprises, a lot of the most valuable problems have both structured and unstructured data, and you need to combine the two to get real insights.
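The idea of a system that decides whether a question needs a structured query, an unstructured lookup, or both can be sketched as follows. This is an illustrative Python example under loose assumptions: the keyword-based `needs_sql` router, the word-overlap `keyword_retrieve` function, and the in-memory `DOCS` corpus are hypothetical stand-ins, and the SQL string is supplied by the caller, whereas in the system described here a language model would decide on and generate the query.

```python
import sqlite3

# Hypothetical unstructured corpus standing in for documents synced from
# Google Drive, SharePoint, Confluence, or a document bucket.
DOCS = {
    "q3_memo": "Revenue dipped in Q3 because of a supplier delay in the EMEA region.",
    "hr_policy": "Employees receive 25 vacation days per year.",
}


def needs_sql(question: str) -> bool:
    """Hypothetical router: flag aggregate-style questions for a structured query.
    A production system would let a language model make this decision."""
    q = question.lower()
    return any(cue in q for cue in ("how much", "total", "sum", "average"))


def keyword_retrieve(question: str, docs: dict[str, str], k: int = 1) -> list[str]:
    """Rank documents by word overlap with the question (a stand-in for real retrieval)."""
    q_words = set(question.lower().split())
    ranked = sorted(
        docs.values(),
        key=lambda text: len(q_words & set(text.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_context(question: str, conn: sqlite3.Connection, sql: str) -> str:
    """Combine structured query results with unstructured passages into one context."""
    parts = []
    if needs_sql(question):
        rows = conn.execute(sql).fetchall()
        parts.append(f"SQL result: {rows}")
    parts.extend(f"Passage: {p}" for p in keyword_retrieve(question, DOCS))
    return "\n".join(parts)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE revenue (region TEXT, quarter TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO revenue VALUES (?, ?, ?)",
        [("EMEA", "Q3", 120.0), ("EMEA", "Q2", 150.0), ("US", "Q3", 300.0)],
    )
    print(build_context(
        "What was total Q3 revenue and why did it dip?",
        conn,
        "SELECT SUM(amount) FROM revenue WHERE quarter = 'Q3'",
    ))
```

The point of the sketch is the combination step: the aggregate number comes from the database, the explanation comes from a document, and only the two together answer the question.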

Integrating databases and models

Jeff Dance: Okay, so take some raw databases—sometimes that’s messy—and take the unstructured data, documents, and combine those worlds. What about leveraging other models like Claude or ChatGPT and getting other outside large language models involved? Does your system do that as well?

Douwe Kiela: Yes, we’re language model agnostic. You can bring your own language model. We also have our own, which we call our grounded language model. It’s currently number one on Google’s FACTS leaderboard, which measures the factuality of language models. We often work with regulated industries where you have large quantities of complex data and problems, and a very low tolerance for hallucination or mistakes. In those cases, it’s even more important to stick to the context. One of the problems with mainstream, general-purpose language models is that sometimes they think they know better than the context they’re given, and that leads to hallucination. We’ve trained a generative language model specifically focused on being very grounded in the context provided. Because we’re so good at providing the right context, we have much less hallucination than others in the industry.

Jeff Dance: That’s great. Sometimes I remind people that hallucination is probably a very human trait because we all have very distinctly different ideas. We just have to look no further than our politics and the different ideas that we have on the extremes.

Douwe Kiela: About this whole term “hallucination”: I think it’s very possible that my colleagues at FAIR and I sort of invented it, or at least made it into a thing. It’s actually a very bad term. We used to have a good name for when language models or AI systems made mistakes, and that was “mistake” or “error.” For some reason, “hallucination” became the way to describe these mistakes, but it’s always been extremely loosely defined, including what the difference is between a generic mistake and a hallucination. That’s been a bit problematic for the field. Very often any mistake is called a hallucination, but that’s not really the right terminology.

Jeff Dance: Yeah, it’s really interesting. Those who are deep in this understand it, but the general populace hasn’t quite come to understand temperature, or RAG either: the idea that you can have conversational AI over a more factual data set, which sounds like a specialty of yours, versus the whole creative side. With deep research, the engines today seem to be trying to determine what your question is and then tilt one way or the other. Deep research, by default, tries to be more deterministic or factual, but the latest engines seem to be working out what your request is so they can move the temperature one way or another. That seems to be more of a dynamic thing with agentic AI. I’m curious how you think about agentic AI with your platform, given that it’s the new thing this year, though maybe you’ve already been working on it for a couple of years. Tell us more about what you’re doing with multi-agent systems. I don’t quite grok the notion of multi-agent combined with RAG. Do I need that with RAG?

Agentic AI potential

Douwe Kiela: That’s a very open-ended question. First, on hallucination: hallucination is basically a feature, not a bug, if you want the language model to be good for brainstorming or creative writing. But for a RAG use case, where you have to give the right answer and a wrong answer is a costly mistake, it’s not good. That’s part of the problem with general-purpose language models: they’re designed to be useful for everybody. That’s why specialization is so valuable. If you really care about accuracy, you should specialize for sticking to the context, not for brainstorming. On the agent side, I always say that agents are simultaneously the most overhyped and the most underhyped technology right now. It’s very clearly going to change everything, but there’s so much hype around it that it’s confusing for people, even what an agent is and what it really means. In the RAG setting, an agent is really just a model that reasons actively over its context and can take actions to manipulate that context. For agentic RAG, you have a reasoning model, a planner, doing some thinking: “This is my question, let me look in this data source. If I don’t find the right answer, I’ll try another one. Based on the answer, maybe I’ll do something else.” The fundamental difference is that static RAG is passive: you always retrieve, maybe filter or re-rank the results, and then give them to the language model as context. In active RAG, the language model or reasoning model decides, “I’ll look here, then do that,” so it’s active rather than passive. We’ve been doing a lot of work on that because it’s clearly much better; our internal benchmarks show an incredible difference between the old and new paradigms, and we’re enabling this for our customers. Hooking up large quantities of complex documents to such a system, especially a multi-agent system where agents are specialized for specific tasks, lets you solve incredibly hard problems.
For example, we’ve been working on analyzing log file dumps: device logs that are basically illegible to humans. Our multi-agent system, given context on what’s in these log files and how to extract it, can reason over them in an agentic way. It can do root cause analysis almost perfectly, figuring out what’s actually going wrong, even with reference logs, and providing deep investigation, plots, and figures to make sense of everything. It’s incredible technology. The companies we’ve talked to about this problem sometimes take days to analyze these log files. We do it in less than five minutes, with comparable, if not better, accuracy.
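The contrast between passive and active RAG can be made concrete with two small functions. In this Python sketch, `static_rag` always retrieves once from a single source, while `agentic_rag` keeps consulting further sources until a check says the gathered context is sufficient. The planner here is deliberately simplistic and hypothetical: it tries sources in a fixed order, and the `generate` and `is_sufficient` callables are placeholders for a language model and a reasoning model’s judgment, which a real agentic system would use to choose the next source or action dynamically.

```python
from typing import Callable

# A retrieval source maps a question to a list of passages.
Source = Callable[[str], list[str]]
Generator = Callable[[str, list[str]], str]


def static_rag(question: str, source: Source, generate: Generator, top_k: int = 3) -> str:
    """Passive RAG: retrieve once, keep the top_k passages, then generate."""
    passages = source(question)[:top_k]  # truncation stands in for filtering/re-ranking
    return generate(question, passages)


def agentic_rag(
    question: str,
    sources: dict[str, Source],
    generate: Generator,
    is_sufficient: Callable[[str, list[str]], bool],
    max_steps: int = 5,
) -> tuple[str, list[str]]:
    """Active RAG: look in one source, check the result, and move on if it is not enough."""
    context: list[str] = []
    trace: list[str] = []  # which sources the planner consulted, in order
    for name, source in list(sources.items())[:max_steps]:
        trace.append(name)
        context.extend(source(question))
        if is_sufficient(question, context):
            break  # stop as soon as the context answers the question
    return generate(question, context), trace


if __name__ == "__main__":
    sources = {
        "wiki": lambda q: [],
        "manuals": lambda q: ["Error E42 means the fan controller failed."],
        "logs": lambda q: ["2024-01-01 12:00 E42 raised on device 7"],
    }
    answer_first = lambda q, ctx: ctx[0] if ctx else "I don't know"
    found_e42 = lambda q, ctx: any("E42" in c for c in ctx)

    print(static_rag("What does E42 mean?", sources["wiki"], answer_first))
    print(agentic_rag("What does E42 mean?", sources, answer_first, found_e42))
```

In the usage example, the static pipeline is stuck with whatever its one source returns, while the agentic loop notices the first source came up empty and moves on. A multi-agent version would replace the fixed loop with specialized agents (for example, one that parses logs and one that compares them against reference logs), each contributing to the shared context.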

Layers of context in AI

Jeff Dance: Wow. That’s awesome. It sounds like there are multiple layers of context: the context of RAG and the prompt and maybe the temperature; the context layers you build; and having the right data underneath from a contextual perspective. Is that something you think about—getting all the context layers right for the problem you’re solving?

Douwe Kiela: Yes, context is a broad term. It’s about having the underlying context from the data, but also contextual elements in configuring the agent, like hyperparameters, prompts, and temperature settings. In our platform, you have lots of different knobs to fine-tune and capture exactly the right context you want it to have.

Jeff Dance: Right. The history of software is about inputs and outputs, but what’s amazing in the age of generative AI is that you put in a little bit of input and get so much output. Today, you can put in an input and get an entire book, presentation, website, or app. It’s producing so much, but I think in that context, we overestimate what it can do with some of our questions or problems. As companies, we’re not taking the time to get the context layers right. Because of that, we’re not always having successful prototypes.

Douwe Kiela: Very often, right? Have you seen the MIT report? Ninety-five percent of AI projects don’t get any ROI. That’s not because language models aren’t good—it’s because the context problem hasn’t been solved for those folks.

Jeff Dance: They haven’t set up the right context to solve the problem at hand. They’re throwing the engine at it, giving it the prompt, but not spending the time to get the contextual layers right.

Douwe Kiela: Yes, it’s like an engine with no wheels and no steering wheel—something like that.

Jeff Dance: Right. Maybe you need a new term to define that. We hear a lot about large language models and big data, but sometimes we just need small data or a very good small language model, with the right context layers. Like with the log files: it’s incredible what the technology can do when it’s tuned. We just need to do more tuning. Not everyone understands that, and it’s new, but it’s coming around.

Re-skilling and job evolution in the age of AI

Douwe Kiela: Yes, there are lots of interesting observations around that. You’ve probably heard that everyone in AI needs forward-deployed engineers—it’s one of the big things everyone is talking about now. It’s necessary to have these folks working alongside AI systems to get them to be good enough. What those forward-deployed engineers do is exactly what you described: tuning the context to make sure it works.

Jeff Dance: That’s awesome. Tell us a bit more about some of your experiences. We’ve talked about the current state and where enterprises are, and we’re seeing both sides—people who are successful and excited, and others who are frustrated or just starting out, with CEOs saying they need an AI strategy. We’re seeing the entire spectrum. I’m sure you are as well, helping businesses implement AI. Where do you see the industry right now in terms of adoption? It seems like we’re still in the early stages for businesses. How do you see things evolving in the next year or two?

Douwe Kiela: Yes, I think you’re right that it’s still early stage. There’s still a lot of experimentation happening, and I don’t think that’s a bad thing. With fundamentally new and disruptive technology, if you’re not experimenting, you’re losing out. You just have to do it. In terms of timeline, I always say that 2023 was the year of the demo. ChatGPT had just happened, and everyone was building cool demos, thinking about what was possible. Then 2024 was about getting promoted by being the first to have a production use case; it didn’t really matter if people used it, as long as you got it into production. I was expecting this year to be the year of ROI, and maybe that has happened a little bit, if you look at the MIT report and the outrage around it. But what’s interesting is that because agents came around and everyone is experimenting with them, it’s almost as if we’ve reset the cycle back to experimentation. Agents are even more powerful, so we have more time for experimentation before we need to show ROI. But that obviously won’t last forever. I think next year is really the year we have to show ROI. I see a lot of folks under pressure, not just to play with AI and do something now, but to really show value from it. That’s when you care about accuracy, trustworthiness, sticking to the context, and making sure you have the right context.

Jeff Dance: Yes, and from a business climate perspective, there’s a lot of focus on value right now, given the way funding and budgets are working. There’s not as much free-form experimentation or even innovation, but there’s interest when there’s value.

To your point, ROI is going to be a bigger focus in the next year, and I can see that happening. We’ve been trying to position AI in terms of value drivers: what are the value drivers in your business, and how do we attach AI to them? The key is knowing what the original value drivers are and making sure we’re touching them, rather than just experimenting and prototyping to see what happens.

Douwe Kiela: Prototyping without thinking about value is pointless. But you need to be careful, because if you do it fully top-down and only look at numbers, it’s easy to identify the five biggest cost centers, like your call center or customer support, and say, “We’re just going to get value there,” focusing only on cost savings. There’s nothing wrong with that, but I think the real potential of AI is much more bottom-up than top-down. In large organizations, there are so many use cases; think of a bank and all the activities in people’s day-to-day jobs. If you can automate all of that, and let people do the automating themselves rather than imposing it on them, you can really revolutionize entire organizations. That’s starting to become possible.

Bottom-up innovation

Jeff Dance: That’s one of the interesting things happening with enterprise AI. At companies that have top-down support but allow bottom-up experimentation and innovation, hundreds of agents are being built and shared. I think that’s ideal. Even at our company, we’ve created over 290 agents and published 112 of them for one another. We’ve heard from others that when they deploy and allow people to experiment, people tailor agents to all their unique workflows. If 50% to 80% of our costs are people doing knowledge work, and those people can augment themselves, it’s not just one big thing; it’s dozens or hundreds of small things that add up to collective efficiency.

Douwe Kiela: Exactly.

Jeff Dance: You mentioned costs, but it’s not just about costs. Were you also referring to revenue? Do you have thoughts on that?

Douwe Kiela: People are trying to use AI to make their organizations more efficient, which reduces overall cost. But if you only think about it that way, you’re missing out on opportunities for new revenue generation. You really need to think about both.

Jeff Dance: I think it’s easy to see from an e-commerce perspective, because e-commerce companies have transactional revenue and can quickly see the impact of AI features in their data. But I think the pattern—

Douwe Kiela: Yes, that’s about content generation. In e-commerce, it’s easy to show the value of AI for generating new revenue because you’re optimizing for clicks, SEO, or similar things. But the more interesting question is: What happens when you apply AI to a large financial institution or a deep technology company? The opportunities for new revenue generation are further removed, because the process is more complex—how a product comes into existence and is monetized over time.

AI potential in banking

Jeff Dance: I agree. My point is that what’s happening with e-commerce and those transactional results can apply to new businesses as well. We’re just generating new ways of adding revenue, often building on what we already have. I like your example of banking; that’s another transactional area where the ability to see value could be pretty quick. What other categories of businesses have you seen success in? Are there patterns in industries or functions where you’re seeing early adoption and success?

Douwe Kiela: The functional angle is an obvious one, and many started there. In the year of the demo, everyone was building demos on HR documents and asking, “How many vacation days do I get?” Those were nice demos, but the value was essentially zero—you could already look up your vacation days elsewhere. The most interesting applications are for complex problems on complex data, where you need highly trained humans to do complicated things. That’s what we’re focused on: sectors like financial services, deep technology (semiconductors, chemical engineering), or professional services like legal and tax. These areas have lots of complex data, and you need to extract the right context and reason through it to generate new insights—not just extract information, but provide something valuable, like analyzing root causes in log files or giving specific tax advice.

The future of AI disruption

Jeff Dance: So you’re tackling bigger problems where there’s high value. You’ve been in this from the beginning and chose your educational path wisely for everything to come together as it has. Looking to the future, where do you see things evolving? Maybe three or five years out—though it’s probably hard to predict ten years from now.

Douwe Kiela: Thinking ten years out is impossible. Five years is impossible too. Even three is hard; I can barely predict what’s going to happen in the next six months. The rate of change is so fast, and I think people are underestimating the disruption this technology is already bringing and will continue to bring. Even if you freeze language model technology—let’s say GPT-5 is the standard—and just focus on productization and solving the context problem, you can still have massive economic disruption. And we know language models are improving every few months. People don’t really understand what’s happening. You can see it with developers—coding is not the same profession anymore. That disruption will extend to many white-collar and back-office jobs. That wave is coming; it’s at our door right now. My optimistic take is that people will become much more productive, with AI augmenting rather than replacing them. For the most important and valuable problems, you’ll want to keep humans in the loop—at least for the next few years. But with the current rate of change, a lot of the more boring activities can be done by AI, freeing up our time to be human.

Jeff Dance: That’s the history of technology: we try to get rid of the manual, dull, dirty, dangerous tasks. That’s also the promise of robotics. Some of the deepest roboticists believe the future of robotics is to help humans be more human—to leverage our greatest strengths. I think there’s a parallel with AI and with the history of technology, from the printing press to the computer to the spreadsheet. We always thought these things would replace people, but they just changed work and often created more work. Now we have four knowledge workers for every manual laborer. Where do you see things shifting from a work perspective, especially in terms of re-skilling and new job creation versus jobs going away?

Douwe Kiela: If you’re not re-skilling with AI now, you’re really missing out. There’s no excuse not to. But at the same time, the impact doesn’t have to be about replacing human activity. The spreadsheet is a good example—it just took care of stuff that was annoying to do manually. People often ask what their kids should study for the future, since it will look so different. I always tell them that the one skill that will remain extremely important is the ability to concisely and precisely articulate thoughts—basically, language. How do you tell a language model exactly what you want? If you want to be good at that, I highly recommend studying philosophy or English literature.

Jeff Dance: We’re getting back to fundamentals—the human fundamentals: good communication, problem solving, problem framing, precision.

Douwe Kiela: Exactly. Mathematics is another good example of that. There’s a quote from Jensen Huang at Nvidia: “Every job will be affected by AI, and pretty much immediately, but you’re not going to lose your job to an AI—you’ll lose your job to someone who uses AI more effectively than you.” That’s why it’s so important to upskill and learn to articulate exactly what you want AI to do for you.

Empowerment through understanding

Jeff Dance: I try to remind people that you need to engage and learn. Humans still have an edge over artificial intelligence; it’s still artificial. We have trust, connection, values, senses, genuine emotion, humility, and the ability to envision the future. We create meaning, and we have moral judgment and intuition. Relationships matter. But we all fear what’s new, and in the absence of knowledge or understanding, we assume the worst. To get past that anxiety, people need to lean in and understand, so they can be empowered. This is just another tool that allows us to create, and creation is one of the greatest human capabilities. With these tools we’re now super creators, able to be much smarter at anything we do.

Douwe Kiela: I hope that with this technological reset and the economic disruption that comes with it, we also use it as an opportunity to put our social lives in a more prominent position again. Many things that make us uniquely human are about our interactions with other humans. Hopefully, as AI frees up our time by doing the boring stuff, we spend more time doing social things with others, rather than being glued to a screen consuming AI-generated content. Call me an optimist.

Jeff Dance: That’s a good promise to lean into. The proliferation of smartphones has had consequences for us as human beings. We have our heads down more, rather than up and engaging. You talked about spending time with your kids and how we engage with others and create together. Hopefully, we have more time for that. With AI and its conversational interface, we can talk to our computers now. I hope we’ll be more heads up and context-aware, rather than heads down looking at screens. We’ve narrowed our focus with mobile phones, and I’m hoping, like you, that we can unhitch ourselves from these small screens. My hope is that AI will help us do that.

Douwe Kiela: I like that.

Jeff Dance: From an ethics perspective, just a few more questions. With smartphones, we just let the technology change us without really thinking about it. Now, 15 years later, we’re reconsidering. Parents see this with their kids—my kids’ first ten words included “Alexa.” Can you think of any principles or ethical guidelines to consider, so we don’t make the same mistakes? I don’t think we’ve done smartphones right as human beings.

Douwe Kiela: It’s a fascinating question. I wish I had a good answer. The risk with trying to impose constraints on innovation is bigger than you think. If you try to do everything top-down, you might kill innovation, even if it comes from a good place. I’m European, and I get frustrated by the European government’s focus on premature regulation, which can make it harder to innovate. I’m more in favor of innovating as quickly as we can and trusting society to figure out, over time, the right way to interact with new technology. That’s what we did with the internet—it was chaos at first, and now it’s a bit more organized. The same is true with mobile phones; now we have parental controls. The same will be true with AI. When you have new technology, you need to encourage innovation first, then figure out how to use it appropriately. We’re lucky to be alive in these interesting times.

Jeff Dance: I agree. AI is like a young stallion—we’ve let it run free, but how do we harness it and make sure it’s used for good? Technology can go either way; it can enhance or hurt our relationships, creativity, and knowledge.

Douwe Kiela: That’s true for all technology. Sometimes people think it’s uniquely true for AI, but should we regulate browsers because people can visit illegal websites? That doesn’t make sense. You shouldn’t regulate the technology itself, but rather its application.

Jeff Dance: So it’s more about principles, applied not at the level of the raw technology or government, but at the level of the business, the family, or parenting. It’s about context: how we guide from that perspective. Everything is contextual. Awesome. Let’s end on that note. Douwe, thanks so much for being with us. I really appreciated your perspectives, expertise, and passion.

Douwe Kiela: Everything is contextual. Thank you.

Jeff Dance: Thank you for all your insights.